Blogs

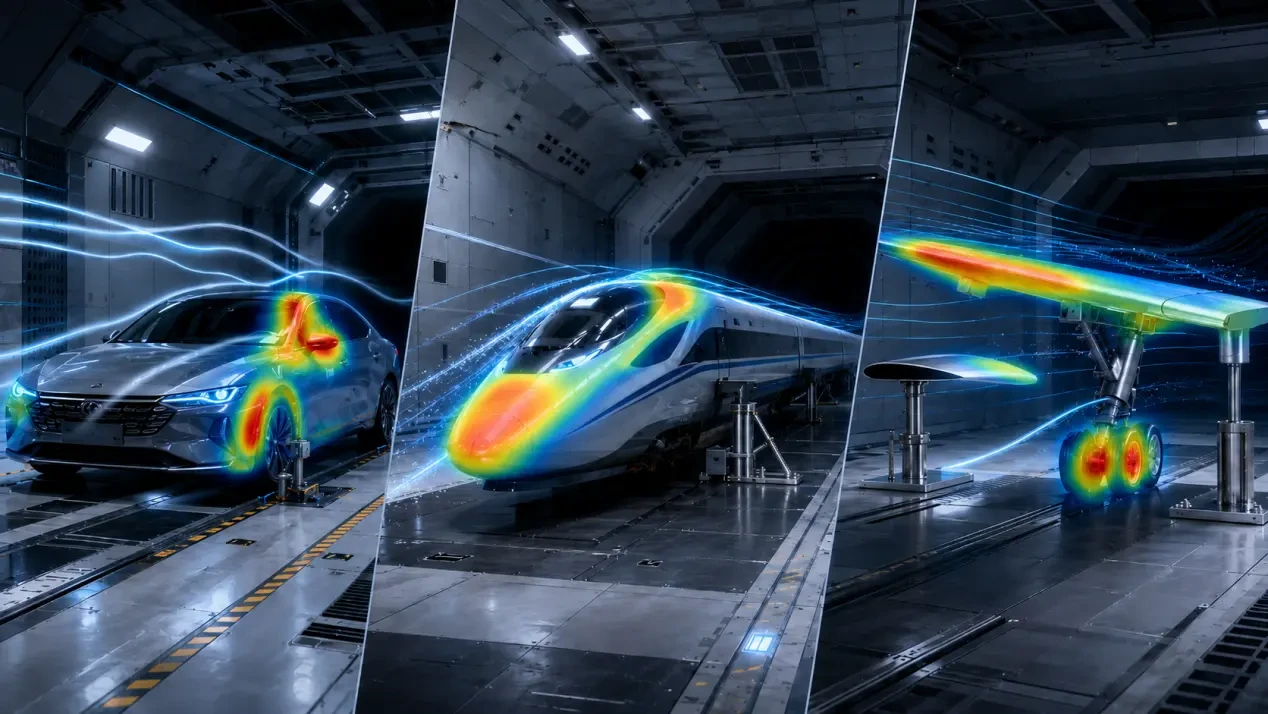

Wind tunnels were originally built to see the flow. Within a controlled test section, engineers direct airflow at a specified velocity over vehicles, wings, UAVs, rotor blades, or scale models. By analyzing pressure distributions, aerodynamic forces and moments, smoke visualization, Particle Image Velocimetry (PIV), and balance measurements, they can determine whether flow separation occurs, drag [...]

In today's rapidly evolving electroacoustic product landscape, production line testing systems are no longer expected to simply "produce measurements." They must balance test capability, throughput, stability, and scalability. Core metrics such as frequency response, distortion, and sweep analysis remain essential. At the same time, more advanced requirements, such as anomalous sound detection, multi-channel synchronization, automated [...]

A practical, repeatable workflow for production and lab teams, covering calibration, RF measurements, pass/fail limits, and automated reporting. Frequency Calibration and Bluetooth Performance Testing. Test System: QuickRadio + MT8852B Bluetooth Tester. QuickRadio provides a powerful platform for testing RF front-end interactions. It supports multiple preconfigured sequence templates, making it easy to customize RF test workflows [...]

The audio quality of Bluetooth products is critical, yet traditional manual testing is inefficient and inconsistent. To address this, we leverage the secondary development capabilities of the CRY578 to deliver an efficient, programmable, and automated testing solution. Core Tool: CRY578. Interface Overview: The CRY578 is a hardware interface specifically designed for audio testing. It functions [...]

In our previous blog post, "Anomalous Sound Detection: From Human Ears to AI," we discussed the key pain points of manual listening, introduced CRYSOUND's AI-based anomalous sound testing solution, outlined the training approach at a high level, and showed how the system can be deployed on a TWS production line. In this post, we take the [...]

As A²B microphones and sensors are increasingly adopted in automotive applications, the demand for reliable testing in both R&D and production is also growing. This article explains why A²B testing matters, highlights the advantages of A²B over traditional analog cabling in terms of interconnect and scalability, outlines key measurement KPIs (such as frequency response, THD+N, [...]